This talk will showcase how to build an environment that sets up your services (e.g. Apache web servers, KDCs, ...), load balancing, routing and monitoring from a simple service configuration. A few lines of YAML and the power of Python are the source for generating configuration for HAProxy, BIRD (route reflection and anycast nodes), apache2 and Icinga2.

Keeping your service configuration aligned across hundreds of hosts is not a simple task. In this talk, we illustrate how we automated the integration of HAProxy into our infrastructure at the University of Paderborn.

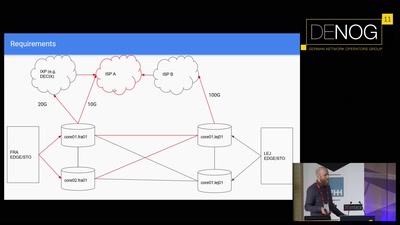

As our current generation of commercial load balancer appliances approached end of life, we looked at replacement options and, while we were at it, at improving how we manage our services. The main goal was to build a scalable, consistent, active-active setup of load balancers that could be easily automated with open source tools.

We needed a way to define what a service is and how and where it should be configured, balanced and monitored, so we created a simple service definition format in YAML and a small Python library to help with parsing, inheritance, defaults, etc. The automation framework Bcfg2 was a given, as it was already in use to manage hundreds of Linux and Windows systems and services. As it is written in Python, it is easily extendable.
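To make the idea of inheritance and defaults concrete, here is a minimal sketch of how such a resolution step could look. It is not our actual library: the key names (`inherits`, `port`, `frontend_ip`, `backends`, `anycast`, ...), the `DEFAULTS` table and the `resolve` function are all hypothetical, and the `SERVICES` dict stands in for what a YAML parser would return.

```python
from copy import deepcopy

# Hypothetical global defaults; the real key names are not part of this abstract.
DEFAULTS = {"balance": "roundrobin", "monitor": True, "anycast": False}

# Stand-in for the parsed YAML service definitions (illustrative data only).
SERVICES = {
    "web-base": {"port": 443, "check": "http"},
    "webmail": {
        "inherits": "web-base",
        "frontend_ip": "192.0.2.10",
        "backends": ["198.51.100.11", "198.51.100.12"],
    },
    "kdc": {"port": 88, "anycast": True, "backends": ["198.51.100.21"]},
}


def resolve(name, services, defaults=DEFAULTS, _seen=()):
    """Return the effective settings: defaults, then parents, then the service."""
    if name in _seen:
        raise ValueError(f"inheritance cycle at {name!r}")
    raw = services[name]
    parent = raw.get("inherits")
    if parent is None:
        # No parent: start from a private copy of the global defaults.
        merged = deepcopy(defaults)
    else:
        # Resolve the parent first so its values can be overridden below.
        merged = resolve(parent, services, defaults, _seen + (name,))
    # The service's own keys win; "inherits" is bookkeeping, not a setting.
    merged.update({k: deepcopy(v) for k, v in raw.items() if k != "inherits"})
    return merged
```

In this sketch, `resolve("webmail", SERVICES)` picks up `port` and `check` from `web-base` and `balance` from the defaults, while `kdc` overrides the default `anycast` flag.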

As load balancing options, we implemented anycast (for example for Kerberos KDCs) as well as balancing by HAProxy nodes, where the HAProxy frontend IPs may be anycasted as well. When running production services, it is important to know when things break before the user does, so setting up monitoring for frontend and backend services is part of the picture, too. All bits of configuration for HAProxy, anycast, route reflection, monitoring with Icinga2, netfilter (nftables) rules, etc. are automagically generated based on the service configuration. This talk will lay out how all those parts fit together and are generated.
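As a rough illustration of the generation step, the following sketch renders an HAProxy frontend/backend pair from one service description. It is only an assumption of how such a generator might look: the function `render_haproxy`, the dict keys and the choice of `mode tcp` are hypothetical, and the addresses are documentation examples. The emitted directives (`frontend`, `bind`, `default_backend`, `backend`, `balance`, `server ... check`) are standard HAProxy configuration keywords.

```python
def render_haproxy(name, svc):
    """Render one frontend/backend pair for a service dict (hypothetical schema)."""
    lines = [
        f"frontend {name}_fe",
        "    mode tcp",
        f"    bind {svc['frontend_ip']}:{svc['port']}",
        f"    default_backend {name}_be",
        "",
        f"backend {name}_be",
        "    mode tcp",
        f"    balance {svc.get('balance', 'roundrobin')}",
    ]
    # One health-checked "server" line per backend address.
    for i, ip in enumerate(svc["backends"], start=1):
        lines.append(f"    server {name}{i} {ip}:{svc['port']} check")
    return "\n".join(lines) + "\n"


# Illustrative input; in practice this would come from the service configuration.
webmail = {
    "frontend_ip": "192.0.2.10",
    "port": 443,
    "backends": ["198.51.100.11", "198.51.100.12"],
}
haproxy_cfg = render_haproxy("webmail", webmail)
```

The same service dict could feed further generators (for example for routing or monitoring), which is what keeps all generated pieces consistent with each other.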

Of course, we also explain the pitfalls of this setup and what we (hopefully) learned from it.