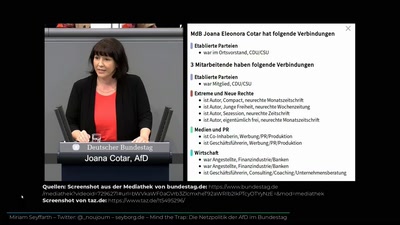

With Equal Qualifications, Men Are Preferred - Algorithmic Bias & the Austrian Labour Market

As algorithms become involved in, or even solely responsible for, more and more decisions in our daily lives, there is growing discussion about algorithms being unfair, biased, or even outright discriminatory.

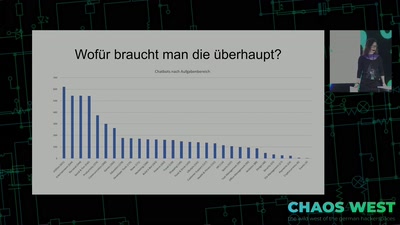

The Austrian Public Employment Service (AMS) announced in October that it would start using an algorithmic system (colloquially, an "algorithm") to decide who will receive more (or less) benefits and support from its system. The first analyses, published in the days after the announcement, already predicted that the system would discriminate against people who are already marginalised.

In this talk I want to give people a better understanding of why and how algorithmic systems are biased, and of how people are trying to solve the problems these biases cause. Specifically, we will see how data collection and interpretation, model creation, problem definition, the definition of success and even the design of outputs can introduce bias into algorithmic systems, or improve justice and equality if proper thought and care are put into designing them.

As a computer science student, students' rights activist and privacy activist, I have worked on the topic of algorithmic bias at various institutions and with different groups of people.

A one-page article on algorithmic bias that I co-authored, aimed at students in Austria, can be found on Progress magazine's website.

A write-up for the master's course "Critical Algorithm Studies" at TU Wien can be found on my blog. It discusses which current issues in algorithmic systems are, in my opinion, the most urgent.